Data Engineering Services

Brainstack Technologies helps you make sense of your data so you can make smarter decisions. On the data analytics side, we help you spot trends, identify areas for improvement, and see what's working and what's not.

If you need help organizing and preparing your data for analysis, our data engineering team can build custom data pipelines that collect information from all your different systems, clean it up, and store it in a way that makes it easy to analyze. So, if you're ready to level up your data game, let's chat.

Challenges in Data Analysis

At Brainstack, we deliver tailored software solutions across diverse industries. Our expertise in key domains enables us to understand unique business challenges and provide innovative technology-driven solutions that drive success.

Our Analytics Expertise

01 Data Pipelines

Data pipelines unlock the value of your data. We help you collect data from all your systems, clean it, and store it for easy analysis.

Our team uses Apache Kafka, Apache Iceberg, Apache Airflow, and more.

We help organizations turn data into revenue:

- Monetize data through products, insights, or APIs

- Build data products that generate revenue

- Create data-driven insights that drive growth

02 Data Quality

Poor data quality can be a show-stopper for your business. You can't trust your reports, your decisions are off, and you waste time and money. That's where we can help as data specialists.

Our Data Engineering team works like detectives, uncovering errors, inconsistencies, and redundancies in data. We clean it up and ensure it's accurate so you can rely on it.

Ready to give your data the TLC it deserves?

Let's Chat

03 Data Observability

So you've managed to get all the data you need, but the story doesn't end there. To keep it reliable, you need data observability tools. These act like X-ray vision for your data pipelines: we can see what's flowing where, spot any blockages, and ensure everything runs smoothly.

We specialize in building observability systems that give you a clear picture of your data health. Think of it as a health checkup for your data. We don't just tell you what's wrong; we pinpoint the root cause and help you fix it fast.
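To make the "health checkup" idea concrete, here is a minimal sketch of two common observability signals: freshness (how long ago a table was last loaded) and volume (whether the latest row count deviates sharply from recent loads). The function name `health_report`, its parameters, and the default thresholds are hypothetical, chosen only for illustration, and do not describe any specific tool.

```python
from datetime import datetime, timedelta, timezone


def health_report(last_loaded_at, row_count, recent_counts,
                  now=None, max_age=timedelta(hours=24), tolerance=0.5):
    """Return a list of human-readable issues for one table (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    issues = []

    # Freshness: flag the table if the last successful load is too old.
    if now - last_loaded_at > max_age:
        issues.append("stale: last load older than max_age")

    # Volume: compare the latest row count with the average of recent loads.
    if recent_counts:
        baseline = sum(recent_counts) / len(recent_counts)
        if baseline > 0 and abs(row_count - baseline) / baseline > tolerance:
            issues.append("volume anomaly: row count deviates from recent average")

    return issues
```

An empty list means both checks passed. A real system would typically send these results to a dashboard or alerting channel rather than return them.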

Contact Us Today

04 Business Intelligence

Business Intelligence (BI) empowers organizations to transform raw data into actionable insights.

However, the foundation for effective BI lies in robust data engineering services. Brainstack can play a crucial role in building the necessary infrastructure to capture, transform, and store data in a way that is accessible and usable for analysis.

Data Engineering Workflow

Our data engineering workflow builds scalable, secure, and high-performance data infrastructure from discovery to deployment.

Data Discovery & Assessment

We assess your current data landscape, identifying data sources, pain points, and business objectives. This helps define the architecture, quality needs, compliance requirements, and pipeline objectives.

Architecture & Pipeline Design

We design a robust data architecture and ETL/ELT pipelines to support batch and real-time processing, including selecting cloud platforms, storage layers, and modeling strategies.

Pipeline Development & Transformation

We develop data ingestion pipelines and apply transformation logic using technologies like Apache Spark, Kafka, Airflow, and dbt. Data is cleaned, validated, enriched, and structured for analytics.
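The clean, validate, enrich, and structure steps can be sketched in plain Python as below. This is an illustrative outline only, not a Spark, Airflow, or dbt implementation; the field names (`customer_id`, `amount`, `country`) and the lookup table are hypothetical.

```python
def clean(row):
    """Normalize keys and trim whitespace from string values."""
    return {k.strip().lower(): v.strip() if isinstance(v, str) else v
            for k, v in row.items()}


def is_valid(row):
    """Keep only rows with a customer id and an amount."""
    return bool(row.get("customer_id")) and row.get("amount") not in (None, "")


def enrich(row, country_by_customer):
    """Attach a country from a reference lookup (hypothetical mapping)."""
    return {**row, "country": country_by_customer.get(row["customer_id"], "unknown")}


def structure(row):
    """Project to the final analytics shape with typed values."""
    return {
        "customer_id": row["customer_id"],
        "amount": float(row["amount"]),
        "country": row["country"],
    }


def transform(rows, country_by_customer):
    """Run the four steps in order and return analytics-ready rows."""
    cleaned = (clean(r) for r in rows)
    valid = (r for r in cleaned if is_valid(r))
    return [structure(enrich(r, country_by_customer)) for r in valid]
```

Keeping each step as a small function makes it straightforward to test in isolation and to move later into whichever orchestration or processing framework a project uses.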

Data Integration & Storage

We integrate structured and unstructured data from multiple sources into unified data lakes or warehouses like Snowflake, BigQuery, or Redshift, ensuring data availability and consistency.

Validation & Data Quality Assurance

We ensure data quality through automated testing, data profiling, and validation rules. We monitor for schema changes, data anomalies, and pipeline failures for trustworthy analytics.
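As a rough illustration of validation rules and schema-change monitoring, the sketch below checks a record against an expected schema and reports drift. The schema, field names, and the non-negative `amount` rule are assumptions made for this example, not a description of a particular project.

```python
# Hypothetical expected schema: field name -> required Python type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def schema_drift(record, expected=EXPECTED_SCHEMA):
    """Return (missing, unexpected) field names relative to the expected schema."""
    missing = sorted(set(expected) - set(record))
    unexpected = sorted(set(record) - set(expected))
    return missing, unexpected


def validate(record, expected=EXPECTED_SCHEMA):
    """Return a list of rule violations; an empty list means the record passed."""
    errors = []
    for name, typ in expected.items():
        if name not in record:
            errors.append(f"missing field: {name}")
        elif not isinstance(record[name], typ):
            errors.append(f"wrong type for {name}: expected {typ.__name__}")
    # Example business rule (assumed): amounts must not be negative.
    if isinstance(record.get("amount"), float) and record["amount"] < 0:
        errors.append("amount must be non-negative")
    return errors
```

Checks like these usually run automatically on every load, so a schema change or rule violation is caught before it reaches reports.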

Deployment, Monitoring & Optimization

We deploy pipelines using CI/CD and implement observability tools like Grafana or Datadog. Continuous monitoring, cost optimization, and pipeline tuning ensure consistent performance.

Adapting to Change

From raw data to trusted insight — delivered incrementally, not as a big-bang migration.

Highest-Value Data Sources First

Initial sprints deliver pipelines for the sources that drive the most decisions. Additional sources are layered in iteratively without disrupting production reporting.

Ready to Unlock the Value in Your Data?

Tell us about your data challenges and get a free, no-obligation assessment from our data engineering team.

Domains We Serve

Here are the most common industries for this offering.

Financial Services

Data analytics platforms, portfolio reporting dashboards, and automated compliance systems for asset managers. Real-time data pipelines, secure API integrations with banking middleware, and regulatory reporting modules tailored to regional requirements.

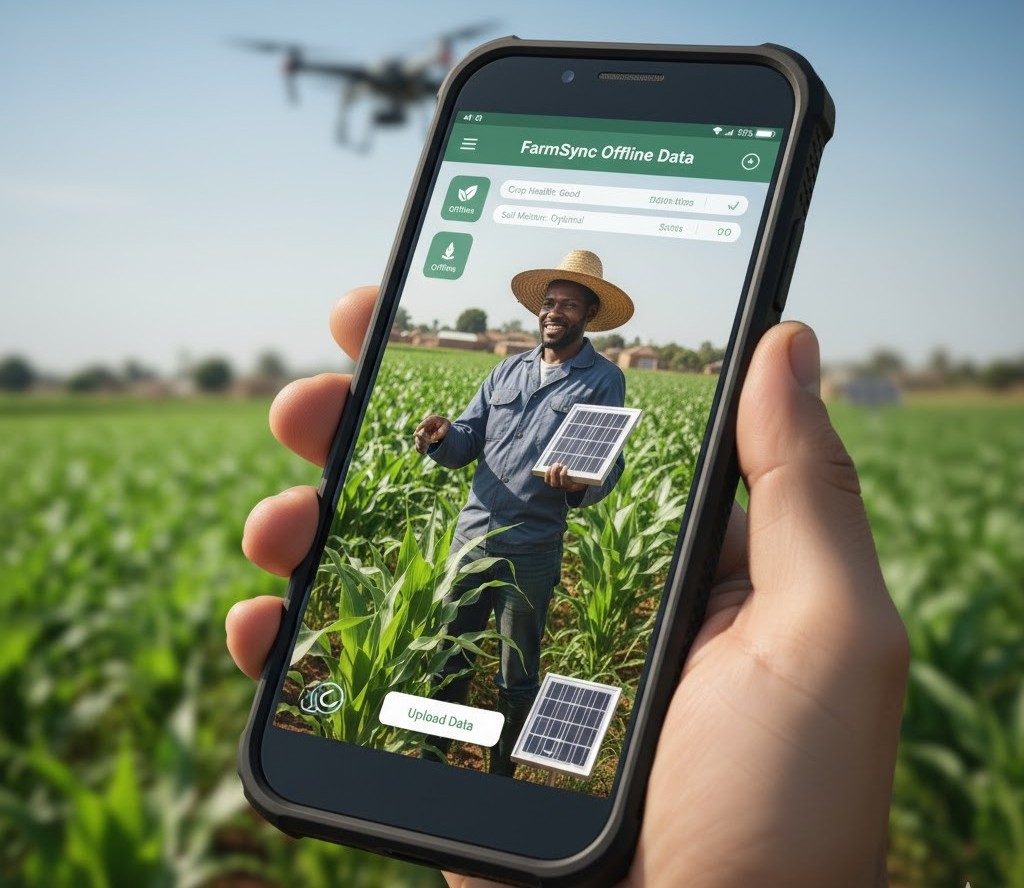

AgriTech & Sustainability

Offline-capable field data collection platforms and supply chain compliance tools deployed across East Africa, South America, and South Asia. PWAs with local data sync, SMS fallback, and voice interfaces. EUDR compliance workflows, traceability mapping, and certification body integration.

Telecom & IoT

Connected device platforms with data ingestion pipelines for high-volume telemetry. Device management portals, real-time operational dashboards, and MQTT/CoAP integration for industrial and agricultural sensor networks.

Governance & Compliance

Regulatory compliance platforms, governance assessment tools, and audit management systems. Survey platforms tracking sustainability indicators across global supply chains, with multi-language support and role-based access.

Technology Stacks We Specialize In

We choose the right tools for each project—from front-end frameworks and backend runtimes to databases, cloud platforms, and DevOps tooling. Every stack decision is driven by your project's requirements: performance needs, team familiarity, long-term maintainability, and cost.

Engagement Models

We tailor delivery to your team structure and ownership preference. For full process details, see the dedicated engagement model page.

Outsourcing

- Outcome-based delivery ownership

- Managed roadmap, QA, and releases

- Best for end-to-end product builds

Staff Augmentation

- Engineers integrated into your team

- You keep sprint and release control

- Best for scaling delivery capacity fast

Tech Consulting

- Architecture and platform strategy guidance

- Roadmap, risk, and cost optimization

- Best for audits, modernization, and decision support

Frequently Asked Questions

Common questions about data engineering, ETL/ELT pipelines, data quality, and how we help businesses build reliable data infrastructure.